Most organizations don’t struggle with AI ambition – they struggle with execution. AI agents present an extraordinary opportunity to drive innovation and competitive advantage, but initiatives often stall due to misalignment, complexity, and a lack of understanding around what agents can really do. The result is an estimated 95% of agentic AI projects failing to deliver ROI. This step-by-step blueprint provides a disciplined path from AI concept to rigorously scoped, testable agentic prototype that aligns to organizational goals, integrates with systems, and includes guardrails right from the outset.

The pressure to deliver quick wins with AI can result in organizations jumping into agentic projects before properly aligning on requirements, workflow, success criteria, and risk tolerance. Low-code/no-code tools support the misconception that agents are a quick and easy build. But when teams skip the fundamentals, the result is agents that may demo well but hide serious liabilities, including security and compliance gaps, untraceable decisions, and runaway cost. A disciplined framework that applies engineering best practices will force the right decisions early to produce an agent that is safe, governable, and scalable.

1. Talk is cheap. Agents create value only when they can act.

AI agents must be designed with clear instructions, the right tools, data, and models matched to the task. Without these foundations, it will be impossible to achieve intelligence or scalability. Instead, the result will be an agent that may be able to talk about the work but can’t deliver it.

2. Failure frequently starts with a poor understanding of workflow.

Teams often drop agents into workflows they don’t understand and expect intelligence to compensate for poor design. Real value comes from identifying where reasoning and judgment matter most and then transforming the workflow, rather than automating broken steps.

3. No guardrails equals no governance – and unlimited risk.

Without proper guardrails, governance, and tuning, agents can deadlock or spiral out of control. To avoid agents acting in ways that create real exposure, define your enterprise risks and agent boundaries upfront, including data privacy and content safety, regulatory, financial, and brand reputation.

Use this step-by-step framework to standardize a prototype-to-production pipeline for your AI agents

This research can help you move from idea to working prototype with practical deliverables that include a detailed product requirements document (PRD), a baseline orchestration pattern, a safety and governance checklist, an evaluation framework with meaningful KPIs, and a roadmap that moves from prototype to pilot and into supported operations. The framework follows four phases:

- Business requirements & value alignment: Define the problem, personas, KPIs, current workflow, and prototype scope.

- Agent capabilities & workflow: Map the agentic workflow, pick models and tools, and write clear agent instructions.

- Prototype orchestration & governance: Choose an orchestration pattern and add guardrails and human-in-the-loop controls.

- Agent evaluation criteria & next steps: Define success metrics, set up tracing and observability, test with datasets, and plan next steps to pilot and production.

Member Testimonials

After each Info-Tech experience, we ask our members to quantify the real-time savings, monetary impact, and project improvements our research helped them achieve. See our top member experiences for this blueprint and what our clients have to say.

9.6/10

Overall Impact

$85,091

Average $ Saved

20

Average Days Saved

Client

Experience

Impact

$ Saved

Days Saved

Motion Picture & Television Fund

Guided Implementation

10/10

$2,720

20

Make-A-Wish Foundation of America

Guided Implementation

10/10

$13,600

5

Great insights on Agentic AI, deployment architectures, considerations, etc.

Educational Computing Network of Ontario (ECNO)

Guided Implementation

10/10

N/A

N/A

Broad spectrum of information lots of opportunities for attendees to engage in discussions

USAble Mutual Insurance Co. dba Arkansas Blue Cross and Blue Shield

Workshop

9/10

$68,000

10

Western Alliance Bank

Guided Implementation

10/10

$136K

10

USAble Mutual Insurance Co. dba Arkansas Blue Cross and Blue Shield

Workshop

9/10

$2,720

10

First Command Financial Services

Workshop

9/10

N/A

N/A

The engagement from info-tech was great for this workshop. Both Martin and Jai delivered great content and training to our team.

First Command Financial Services

Workshop

9/10

N/A

20

The best part of the training was its structure: a clear, step-by-step methodology from defining business requirements through evaluation, plus a h... Read More

TechSavvy s.r.o.

Guided Implementation

10/10

$3,179

2

Flexprint, LLC

Workshop

10/10

$544K

120

Best was the structure of the program and the impact that it had on the folks that attended. They had a renewed sense of excitement about being a p... Read More

Carmel Development & Management Co, Inc

Workshop

10/10

$136K

20

The best part is having the whole team understand the true planning process and the controls that go into an effective Agentic AI Prototype and pot... Read More

Al Nahdi Medical

Guided Implementation

10/10

$2,584

2

Meagan explained a very useful framework for building Agents end to end that we can use in our initiatives ..

City Brewing Company, LLC

Workshop

8/10

$13,600

10

Great mix of theory and practice! We went from Agent basics to building real workflows in a Python hackathon. A perfect high-level overview to appr... Read More

Kansas City Chiefs Football Club

Guided Implementation

10/10

$13,600

5

Turned into more of a sales pitch for Info-Tech services than an information sharing session, but it was still useful and there is interest in leve... Read More

Workshop: Design Your Agentic AI Prototype

Workshops offer an easy way to accelerate your project. If you are unable to do the project yourself, and a Guided Implementation isn't enough, we offer low-cost delivery of our project workshops. We take you through every phase of your project and ensure that you have a roadmap in place to complete your project successfully.

Module 1: Define Business Requirements & Align on Value Proposition

The Purpose

Convert business needs into a clear problem statement, success criteria, and scope to ensure a shared definition of “value,” which must inform every design decision.

Key Benefits Achieved

- Clear line of sight between agent opportunities and measurable business impact.

- Defined personas, KPIs and identified constraints that will ensure your agentic AI system will deliver value.

- Finalize your agentic AI prototype scope across business stakeholders and technical teams.

Activities

Outputs

Introduction to agentic AI concepts

Define the core problem statement

Discover key user personas

- Documented problem statement, personas, and KPIs

Document business KPIs with baselines and targets

Map the current-state workflow for the selected use case, identifying reasoning steps and edge cases

- Shared understanding of as-is workflow with reasoning steps and edge cases

Finalize the prototype scope and boundaries

- Defined and agreed upon prototype scope

Module 2: Map Your Agent Capabilities & Workflow

The Purpose

Design how your agents will work, including mapping workflows, decisions, tools, and handoffs between humans and agents.

Key Benefits Achieved

- Visualize your agentic workflow to demonstrate how your agents will function.

- Identify the right models, tools, and instructions for each agent.

- Prepare your developers to build APIs, agents, tools, and outputs in OpenAI.

Activities

Outputs

Introduction to agent workflow design, models, tools, and instructions

OpenAI Developer Crash Course 1: APIs, agents, tools & structured output.

Identify the optimal model for each agent

- Model shortlist for each agent

Define the necessary tools and agent instructions for each agent

- Data, tooling plan, and draft instructions for each agent

Optimize and rationalize agent distribution

- Initial agent workflow

Module 3: Define Your Prototype Orchestration & Governance

The Purpose

Define how agents are orchestrated, governed, and observed by embedding accountability and human oversight by design.

Key Benefits Achieved

- Design agent orchestration with clear controls, guardrails and oversight.

- Clearly identify areas for guardrails and human-in-the-loop requirements.

- Prepare your developers to build guardrails and orchestration patterns in OpenAI.

Activities

Outputs

Introduction to agent orchestration, guardrails, and human-in-the-loop (HITL)

OpenAI Developer Crash Course 3: Orchestration, guardrails, observability, FinOps

Determine the optimized orchestration pattern for the use case

- Documented orchestration pattern for the use case

Identify input, agent, and output risks

- Risk inventory

Document all necessary guardrails and HITL steps

- Input, agent, and output-level guardrails & HITL

- Optimized agent workflow design documented in the PRD

Module 4: Define Your Agent Evaluation Criteria

The Purpose

Establish clear evaluation criteria including metrics, test cases, traceability, and security.

Key Benefits Achieved

- Define what good looks like through clear agent success metrics.

- Establish your evaluation datasets and test criteria, and ensure design traceability.

- Set realistic expectations around next steps for the design finalization and prototype build.

- Prepare your developers to perform evaluations in OpenAI.

Activities

Outputs

Introduction to agent evaluation

OpenAI Developer Crash Course 4: Evaluations

Document agent competencies, success criteria, and metrics

- Agent success criteria, metrics, and tracing requirements

Document agent tracing requirements

Build evaluation datasets to test agents and the system

- Defined evaluation datasets

Determine your experimentation plan & define next steps

- Experimentation plan and next steps

- Finalized PRD

Design Your Agentic AI Prototype

Design agents with engineering best practices.

Design Your Agentic AI Prototype

EXECUTIVE BRIEF

Executive summary

Your Challenge | Common Obstacles | Info-Tech’s Approach |

|---|---|---|

|

|

|

Info-Tech Insight

Building reliable AI agents requires five reinforcing pillars: Strong Foundations, Thoughtful Orchestration, Safety & Guardrails, Continuous Evaluation, and a disciplined Deployment Strategy. Together they enable teams to move from prototype to production with confidence and measurable business impact.

Agentic AI Ambition Is Outpacing Execution Success

- Organizations are under growing pressure to translate AI ambition into operational reality. The path forward requires moving from experimentation to structured, scalable deployment – a shift that introduces both technical and organizational complexity.

- While enthusiasm for generative and autonomous technologies is high, most implementations remain isolated proofs of concept rather than integrated, repeatable capabilities that deliver measurable business impact.

- Sustainable success depends on measurement and repeatability. Clear performance metrics and monitoring systems are needed to continuously evaluate agentic effectiveness, while a structured roadmap must guide how successful use cases are scaled across the enterprise.

Together, these challenges define the central task: transforming promising experimentation into a disciplined, scalable, and governed model for agentic AI adoption.

95% of generative AI projects fail to deliver tangible ROI.

Source: MIT NANDA “GenAI Divide” Report, 2025

Barriers making it difficult to realize value

- The absence of standardized frameworks for orchestration, communication, and memory makes it difficult to build agents that are reliable, interoperable, and maintainable at scale.

- Traditional performance metrics, designed for static models, fail to capture the reasoning depth, autonomy, and collaboration quality that defines agentic behavior. Engagement and adoption don’t reflect whether agents are delivering operational value.

- Data security, privacy, and ethical considerations take on new dimensions when systems can act autonomously, requiring oversight mechanisms that are still emerging across the industry. Operational efficiency compounds the challenge, as early agentic prototypes often consume disproportionate compute resources or introduce latency that undermines real-world viability.

- Mixed perspective on how to design and build agents has led to confusion, and an over confidence in vendors, drag-and-drop solutions, and “vibe coding.” When teams rely on drag-and-drop or vibe coding, solutions may show well in a demo but fail to deliver enterprise-grade value, and struggle to act reliably, safely or at scale.

Ambition vs. Readiness

70% of CIOs that say they’ve adopted AI Agents.

Source: IDC, 2024

90% of CIOs fail to report ROI.

Source: IDC, 2024

Agentic systems go beyond generative AI

3 Levels of Agentic Progression

| 01 | Assist: Human prompts the AI, which then drafts content or uses tools, often with RAG. The human retains control for execution. No autonomous ReACT cycle is completed by the AI. |

|---|---|

| 02 | Single-Agent Autonomy: A single agent plans and acts across various tools based on a human-set goal. Key steps may require human approval, but the agent drives the workflow; execution can be by human or agent. |

| 03 | Multi-Agent Autonomy: Multiple agents collaborate under an orchestrator to achieve complex business outcomes. This system leverages distributed intelligence for execution, with or without human intervention. |

The evolution from generative AI to advanced agentic capabilities involves a progression through increasing levels of autonomy and complexity.

While low code options are a common entry point for single-level agents or assistants. However, as organizations scale to Multi-Agent Systems, and enterprise level workflows These will require much deeper integration, thoughtful design and custom development. This research focuses on how to build those types of Multi-Agent Systems.

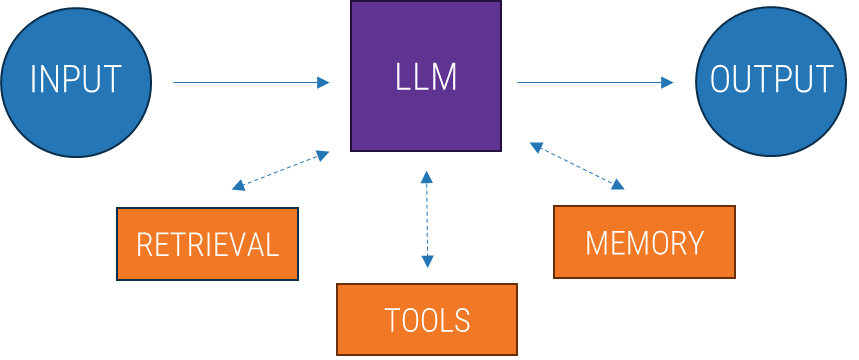

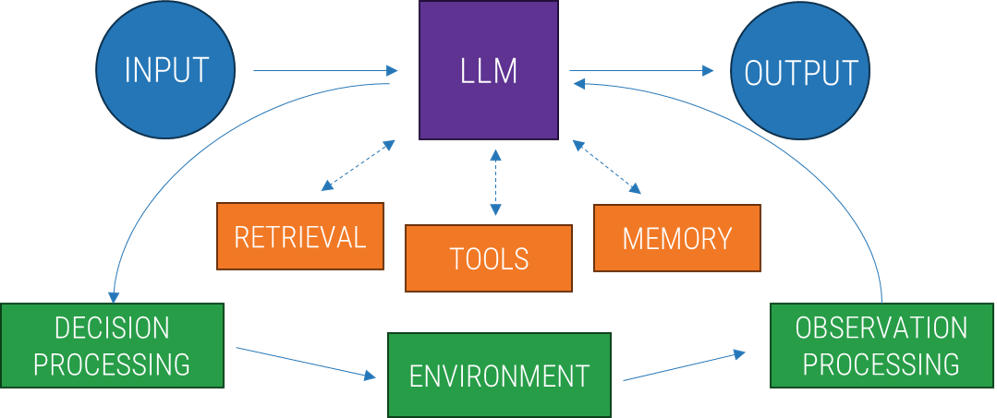

Augmented LLMs are the foundation for autonomization

Adding memory, tools, and data access transforms LLMs from simple chatbots into the building blocks of autonomous systems.

Augmented LLM |

Agents (Autonomization) |

|---|---|

This LLM is enhanced with external capabilities but still works in a linear, input to output flow. The LLM only acts when prompted and its workflow is a single-pass interaction. Process:

| This LLM becomes part of a closed-loop system that allows autonomous decision-making and continuous action without constant human prompting. Process:

|

Agentic AI unlocks new ways for organizations to attain value

Agents excel where traditional rule-based automation fails to handle complex, ambiguous problems. Value-add can be found in scenarios that have:

Complex Decision-Making | Difficult-to-Maintain Rules | Heavy Reliance on Unstructured Data |

|---|---|---|

What an agent does:

How this helps:

Example: Refund approval in customer service workflows | What an agent does:

How this helps:

Example: Performing vendor security reviews | What an agent does:

How this helps:

Example: Processing a home insurance claim |

Agents extend beyond traditional automation software

AI agents move beyond traditional automation by applying context and reasoning to complete tasks on your behalf.

Aspect | Traditional Software | AI Agents |

|---|---|---|

Logic flow | Predetermined if/then branches | Dynamic reasoning based on context |

Input handling | Structured, validated fields | Natural-language understanding |

Adaptability | Code changes required | Learns from instructions/examples |

Error handling | Specific error codes | Contextual recovery strategies |

User interaction | Forms, buttons, static APIs | Conversational and intuitive |

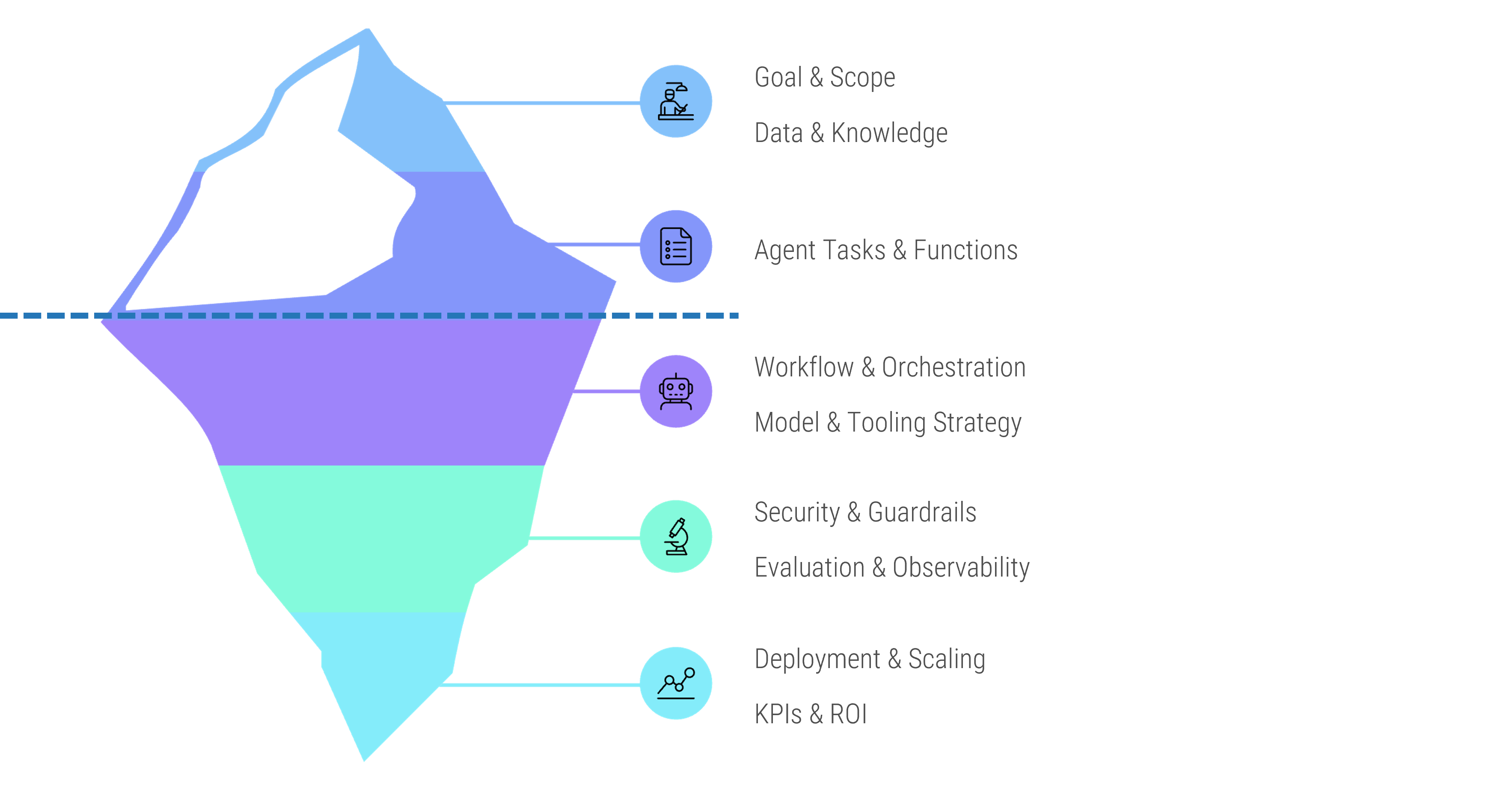

Agents fail when organizations focus on the surface and not the system

While users often perceive the simple interface and outputs of an AI agent as the “tip of the iceberg” a vast and complex infrastructure operates beneath the surface. If the agent can’t reason, extract, and act precisely, it can’t deliver business value.

Under the surface, an agent’s foundation includes:

- Sophisticated model orchestration

- Seamless tool integration

- Robust safety systems

- Continuous monitoring

- Significant operational complexity

Info-Tech Insight

Most agent deployment failures stem from overestimating the simplicity and speed of reaching production. True value comes from meticulous design and robust integration.

Info-Tech’s approach to agent design reduces risks that commonly derail agent projects

Six Failure Patterns of Agentic AI Design

| 01. | Process Blindness Attempting to automate a workflow that is poorly documented, inconsistent, or lacks a clear logic owner. Instead: Document and map the “as-is” workflow and all its exceptions before the implementation. | 02. | Integration Gaps Building an agent in a sandbox without verifying if it can access required APIs or databases in a live environment. Instead: Conduct a technical "plumbing" check early to ensure the agent has authenticated access to every tool it needs. |

|---|---|---|---|

| 03. | Governance Too Late Treating Security, Legal, and Compliance as a "final check," leading to projects being blocked right before launch. Instead: Embed "Privacy by Design" and Human-in-the-Loop (HITL) checkpoints from the requirements phase. | 04. | Unclear “Good” Failing to define what a "correct" answer looks like, making it impossible to measure if the agent is effective. Instead: Establish success metrics (e.g. 90% accuracy on queries) and test datasets before deployment. |

| 05. | Lack of Observability Running agents where you can see the final output but have no way to trace the steps the agent took to get there. Instead: Implement comprehensive traceability from day one. | 06. | Cost Surprises Failing to account for how "loops" and high token usage in complex agentic workflows can lead to exponential API costs. Instead: Set strict token limits, "max-turn" guards, and real-time cost monitoring for all production agents. |

Info-Tech’s methodology to design an agentic prototype

1. Business Requirements & Value Alignment | 2. Map Your Agent Capabilities & Workflow | 3. Define Your Prototype Orchestration & Governance | 4. Define Your Agent Evaluation Criteria & Next Steps | |

|---|---|---|---|---|

Phase Steps | 1.1 Define your problem statement 1.2 Outline personas 1.3 Set KPIs 1.4 Map current workflow 1.5 Determine prototype scope | 2.1 Design an agentic workflow 2.2 Select agent model(s) 2.3 Specify agent tools 2.4 Author agent instructions 2.5 Optimize agent distribution | 3.1 Select the orchestration strategy 3.2 Identify risks 3.3 Define guardrails & human-in-the-loop 3.4 Design agentic workflow (with guardrails & human-in-the-loop) | 4.1 Define success criteria & metrics 4.2 Plan for tracing & observability 4.3 Create evaluation datasets 4.4 Determine next steps |

Phase Outcomes |

|

|

|

|

Insight summary

Overarching Insight

Designing reliable AI agents requires five reinforcing pillars: Clear Business Value, Strong Foundations, Thoughtful Orchestration, Safety & Guardrails, and Continuous Evaluation. Together they enable teams to move from prototype to production with confidence and measurable business impact.

Most failures start with a poor understanding of workflow.

Teams often drop agents into workflows they don’t understand and expect intelligence to compensate for poor design. Real value comes from identifying where reasoning and judgement matter most and transforming the workflow, not from automating broken steps faster.

Agents create value only when they can act.

In order to act, AI agents need to be designed with clear instructions, the right tools, and models matched to the task. Without these foundations you don’t get intelligence or scale – you end up with something that may be able to talk about the work but can’t deliver.

Multiple specialized agents are more reliable than one general-purpose agent.

When each agent has a narrow role and clear boundaries, the system handles edge cases more consistently. This is how organizations scale people. Agents work the same way.

No guardrails means no governance and unlimited risk.

Agents can deadlock or spiral out of control without proper guardrails, governance, and tuning. This means having a detailed understanding of your enterprise risks and agent boundaries including data privacy and content safety, regulatory, financial and brand reputation risks.

The “happy path” requires evaluation-driven development.

Part of the design phase needs to include defining critical evaluation criteria, test cases, and multiple good vs. bad scenarios. Without this, there is no way to reliably improve agents over time, and it’s not safe to deploy them to production.

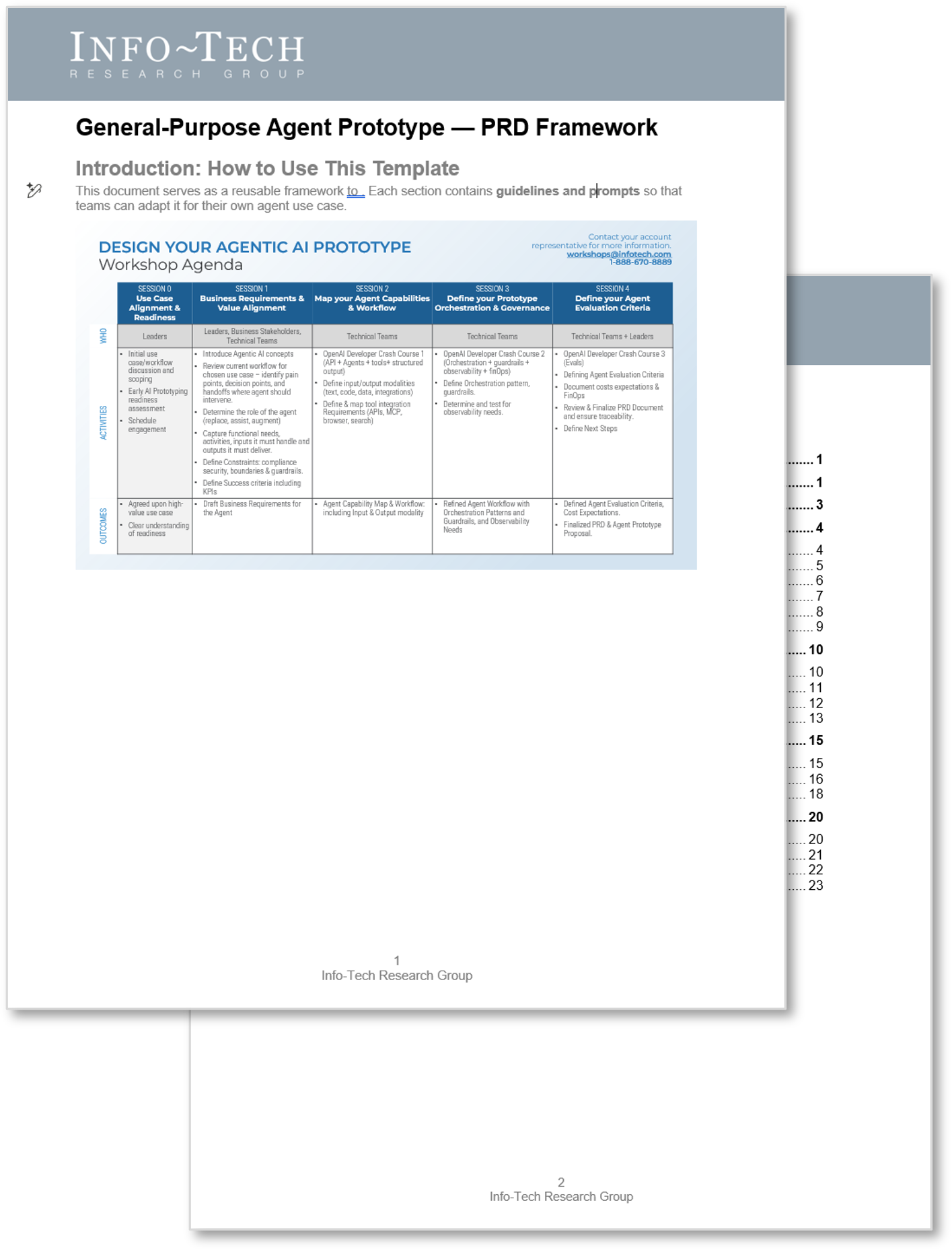

Key deliverable

Info-Tech’s Agentic Product Requirements Document (PRD) helps teams move from an idea for an AI agent to a testable, well-scoped prototype.

Working within the framework of this PRD ensures you’re not just experimenting with an agent, but building it with purpose, safety, and measurable business value front-of-mind.

Move from idea to prototype

The Agentic PRD helps teams move from an idea for an AI agent to a testable, well-scoped prototype. By working through each section, you will:

- Align on the business case: Clearly define the problem, intended users, and measurable outcomes.

- Draw clear boundaries: Specify what is in scope for the prototype and what is deferred to future phases.

- Design with confidence: Choose the right model variant, tools, and knowledge sources while documenting user flows and safety guardrails.

- Plan for accountability: Set success metrics, evaluation methods, and industry-specific compliance checks.

- De-risk delivery: Outline rollout stages, risks, open questions, and implementation priorities so the team can build iteratively and safely.

A complete PRD is a pre-requisite for our technical workshop focused on developing the PRD into a functional prototype.

Blueprint benefits

IT Benefits | Business Benefits |

|---|---|

|

|

Info-Tech offers various levels of support to best suit your needs

| DIY Toolkit | Guided Implementation | Workshop | Executive & Technical Counseling | Consulting |

|---|---|---|---|---|

| "Our team has already made this critical project a priority, and we have the time and capability, but some guidance along the way would be helpful." | "Our team knows that we need to fix a process, but we need assistance to determine where to focus. Some check-ins along the way would help keep us on track." | "We need to hit the ground running and get this project kicked off immediately. Our team has the ability to take this over once we get a framework and strategy in place." | "Our team and processes are maturing; however, to expedite the journey we'll neecd a seasoned practitioner to coach and validate approaches, deliverables, and opportunities." | "Our team does not have the time or the knowledge to take this project on. We need assistance through the entirety of this project." |

Diagnostics and consistent frameworks are used throughout all five options.

Guided Implementation

What does a typical GI on this topic look like?

| Phase 1 | Phase 2 | Phase 3 | Phase 4 |

|---|---|---|---|

Call #1: Define problem statement, personas & KPIs. | Call #2: Map current workflow and set prototype scope. Call #3: Design agent workflow with models, tools, & instructions. Call #4: Optimize agent distribution. Call #5: Choose orchestration pattern. | Call #6: Design guardrails and human-in-the-loop. Call #7: Define successful agent behavior & select success metrics. | Call #8: Create tracing & experimentation plan. Call #9: Identify next steps and finalize documents & approvals. |

A Guided Implementation (GI) is a series of calls with an Info-Tech analyst to help implement our best practices in your organization.

A typical GI is 8 to 12 calls over the course of 4 to 6 months.

Contact your account representative for more information.

workshops@infotech.com

1-888-670-8889

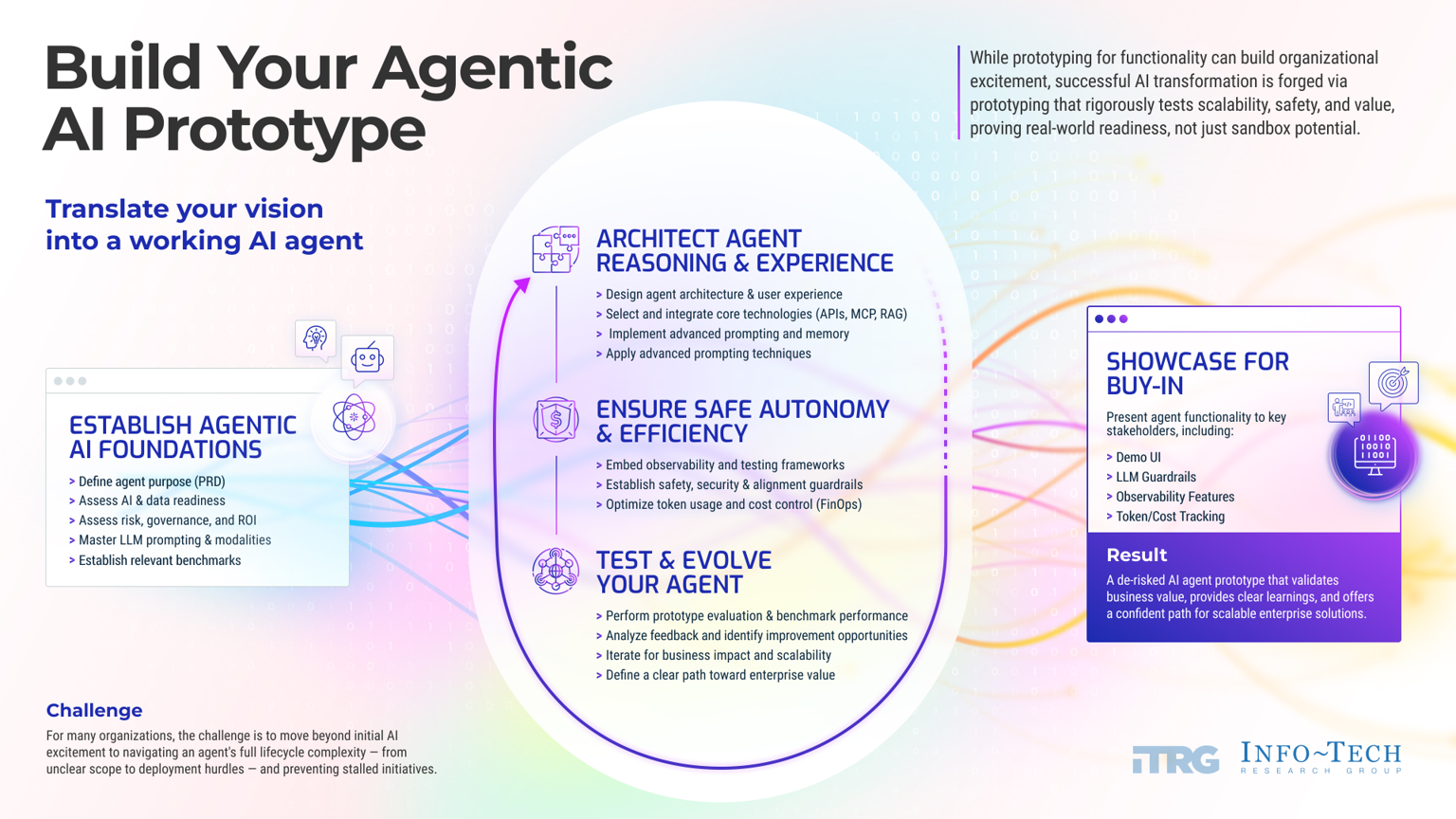

This research covers step one in Info-Tech’s agentic AI prototyping series.

Leverage Info-Tech’s Design and Develop Workshops to rapidly go from vision into a working AI prototype.

| 01 | Workshop 1: Design Your Agentic AI Prototype |

|---|---|

| 02 | Workshop 2: Develop Your Agentic AI Prototype |

DESIGN YOUR AGENTIC AI PROTOTYPE

Workshop Agenda

| SESSION 0 Use Case Alignment & Readiness | SESSION 1 | SESSION 2 | SESSION 3 | SESSION 4 | |

|---|---|---|---|---|---|

WHO | Leaders | Leaders, Business Stakeholders, Technical Teams | Business Stakeholders, Technical Teams | Business Stakeholders, Technical Teams | Business Stakeholders, Technical Teams + Leaders |

ACTIVITIES |

|

|

|

|

|

OUTCOMES |

|

|

|

|

|

Tech Trends 2025

Tech Trends 2025

Define Your Digital Business Strategy

Define Your Digital Business Strategy

Kick-Start IT-Led Business Innovation

Kick-Start IT-Led Business Innovation

Establish a Foresight Capability

Establish a Foresight Capability

Apply Design Thinking to Build Empathy With the Business

Apply Design Thinking to Build Empathy With the Business

Sustain and Grow the Maturity of Innovation in Your Enterprise

Sustain and Grow the Maturity of Innovation in Your Enterprise

Position IT to Support and Be a Leader in Open Data Initiatives

Position IT to Support and Be a Leader in Open Data Initiatives

Double Your Organization’s Effectiveness With a Digital Twin

Double Your Organization’s Effectiveness With a Digital Twin

Develop a Use Case for Smart Contracts

Develop a Use Case for Smart Contracts

Adopt Design Thinking in Your Organization

Adopt Design Thinking in Your Organization

Accelerate Digital Transformation With a Digital Factory

Accelerate Digital Transformation With a Digital Factory

Tech Trends 2024

Tech Trends 2024

2021 Tech Trends

2021 Tech Trends

Implement and Mature Your User Experience Design Practice

Implement and Mature Your User Experience Design Practice

CIO Priorities 2022

CIO Priorities 2022

2022 Tech Trends

2022 Tech Trends

Into the Metaverse

Into the Metaverse

Demystify Blockchain: How Can It Bring Value to Your Organization?

Demystify Blockchain: How Can It Bring Value to Your Organization?

2020 Tech Trend Report

2020 Tech Trend Report

2020 CIO Priorities Report

2020 CIO Priorities Report

CIO Trend Report 2019

CIO Trend Report 2019

CIO Trend Report 2018

CIO Trend Report 2018

CIO Trend Report 2017

CIO Trend Report 2017

AI and the Future of Enterprise Productivity

AI and the Future of Enterprise Productivity

Evolve Your Business Through Innovation

Evolve Your Business Through Innovation

Build a Platform-Based Organization

Build a Platform-Based Organization

Tech Trend Update: If Contact Tracing Then Distributed Trust

Tech Trend Update: If Contact Tracing Then Distributed Trust

Tech Trend Update: If Biosecurity Then Autonomous Edge

Tech Trend Update: If Biosecurity Then Autonomous Edge

Tech Trend Update: If Digital Ethics Then Data Equity

Tech Trend Update: If Digital Ethics Then Data Equity

Tech Trends 2023

Tech Trends 2023

Formalize Your Digital Business Strategy

Formalize Your Digital Business Strategy

Select and Prioritize Digital Initiatives

Select and Prioritize Digital Initiatives

Adopt an Exponential IT Mindset

Adopt an Exponential IT Mindset

Build Your Enterprise Innovation Program

Build Your Enterprise Innovation Program

Build Your Generative AI Roadmap

Build Your Generative AI Roadmap

Annual CIO Survey Report 2024

Annual CIO Survey Report 2024

Drive Innovation With an Exponential IT Mindset

Drive Innovation With an Exponential IT Mindset

Exponential IT for Financial and Vendor Management

Exponential IT for Financial and Vendor Management

Exponential IT for Strategy, Risk, and Governance

Exponential IT for Strategy, Risk, and Governance

Exponential IT for Service Planning and Architecture

Exponential IT for Service Planning and Architecture

Exponential IT for People and Leadership

Exponential IT for People and Leadership

Exponential IT for Security and Privacy

Exponential IT for Security and Privacy

Exponential IT for Applications

Exponential IT for Applications

Exponential IT for Data and Analytics

Exponential IT for Data and Analytics

Exponential IT for Infrastructure and Operations

Exponential IT for Infrastructure and Operations

Exponential IT for Project and Portfolio Management

Exponential IT for Project and Portfolio Management

Assess Your AI Maturity

Assess Your AI Maturity

Develop Responsible AI Guiding Principles

Develop Responsible AI Guiding Principles

Identify and Select Pilot AI Use Cases

Identify and Select Pilot AI Use Cases

Exponential IT Keynote

Exponential IT Keynote

CIO Priorities 2024

CIO Priorities 2024

Build a Scalable AI Deployment Plan

Build a Scalable AI Deployment Plan

Build Your AI Strategy and Roadmap

Build Your AI Strategy and Roadmap

Develop an Exponential IT Roadmap

Develop an Exponential IT Roadmap

Use ChatGPT Wisely to Improve Productivity

Use ChatGPT Wisely to Improve Productivity

Build a FinOps Strategy to Enable Dynamic Cloud Cost Management

Build a FinOps Strategy to Enable Dynamic Cloud Cost Management

Establish a Roadmap for Integrated and Dynamic Risk Management

Establish a Roadmap for Integrated and Dynamic Risk Management

Info-Tech’s Best of 2024 Mid-Year Report: AI Rewrites the Script for CIOs

Info-Tech’s Best of 2024 Mid-Year Report: AI Rewrites the Script for CIOs

Explore the Art of the Possible for Exponential IT

Explore the Art of the Possible for Exponential IT

IT Management & Governance: The Next Evolution

IT Management & Governance: The Next Evolution

Bending the Exponential IT Curve Keynote

Bending the Exponential IT Curve Keynote

Exponential IT in Motion: Transform Your Organization by Transforming IT

Exponential IT in Motion: Transform Your Organization by Transforming IT

AI Trends 2025

AI Trends 2025

LIVE 2024 Keynote Presentations

LIVE 2024 Keynote Presentations

LIVE 2024 Lightning Round Presentations

LIVE 2024 Lightning Round Presentations

CIO Priorities 2025

CIO Priorities 2025

Info-Tech’s Best of 2024 Report: IT Moves Into Position

Info-Tech’s Best of 2024 Report: IT Moves Into Position

Build Your AI Risk Management Roadmap

Build Your AI Risk Management Roadmap

Design Your Agentic AI Prototype

Design Your Agentic AI Prototype

An Operational Framework for Rolling Out AI

An Operational Framework for Rolling Out AI

The AI Vendor Landscape in IT

The AI Vendor Landscape in IT

Run IT By the Numbers

Run IT By the Numbers

Building Info-Tech’s Chatbot

Building Info-Tech’s Chatbot

Assessing the AI Ecosystem

Assessing the AI Ecosystem

Bring AI Out of the Shadows

Bring AI Out of the Shadows

Transform IT, Transform Everything

Transform IT, Transform Everything

Implement AI for Customer Experience

Implement AI for Customer Experience

Info-Tech’s Best of 2025 Mid-Year Report: IT Moves From Disruption to Decisive Action

Info-Tech’s Best of 2025 Mid-Year Report: IT Moves From Disruption to Decisive Action

Implement an AI-Orchestrated Service Desk

Implement an AI-Orchestrated Service Desk

Info-Tech's All-Time Best, 2025 Edition: Change Is the Headline, Fundamentals Are the Path

Info-Tech's All-Time Best, 2025 Edition: Change Is the Headline, Fundamentals Are the Path

Tech Trends 2026

Tech Trends 2026

The AI Playbook

The AI Playbook

AI Trends 2026

AI Trends 2026

Info-Tech’s Best of 2025: The Year AI Stopped Being a Project and Became the Strategy

Info-Tech’s Best of 2025: The Year AI Stopped Being a Project and Became the Strategy

Accelerate AI Literacy and Adoption

Accelerate AI Literacy and Adoption

Publish an Annual AI Performance Report

Publish an Annual AI Performance Report

Develop Your Agentic AI Prototype

Develop Your Agentic AI Prototype