AI is evolving at a dizzying pace. Traditional governance frameworks for safe, responsible, and compliant AI use are struggling to keep up – too static to nimbly adapt when AI technology moves forward, new risks emerge, and regulatory standards change. As agentic AI proliferates, a new, dynamic model of AI governance is needed. Use our step-by-step methodology and practical tools to develop an AI governance roadmap that adapts to shifts in technology, risk, and legislation while aligning with your organization’s values and goals to deliver real impact.

Organizations are searching for a paradigm that enables them to leverage new AI technologies while also governing the new risks that are constantly being introduced. Adaptive AI governance effectively addresses both of those objectives: it doesn’t just mitigate risk – it enables secure innovation that ultimately drives value. Learn how to integrate governance throughout the AI lifecycle, delivering AI governance that grows and adapts with your organization.

1. This involves more than your compliance team.

Adaptive AI governance is not the siloed responsibility of your compliance department alone. It requires governance to be integrated into every stage of developing and deploying AI agents and AI applications. That includes planning and design, data collection and processing, testing and evaluation, monitoring, and decommissioning.

2. AI governance is not one-size-fits-all.

There’s no universal template for adaptive AI governance. The size, maturity, and enterprise governance structure of each organization will influence the specific roles and responsibilities involved in its AI governance program.

3. Humans play a critical role in adaptive AI governance.

Agentic AI involves AI systems performing tasks autonomously. But human beings play important parts in adaptive AI governance, even owning key stages of it. Designers, data scientists, developers, systems integrators, and product managers are just a few of the people who make adaptive AI governance work effectively.

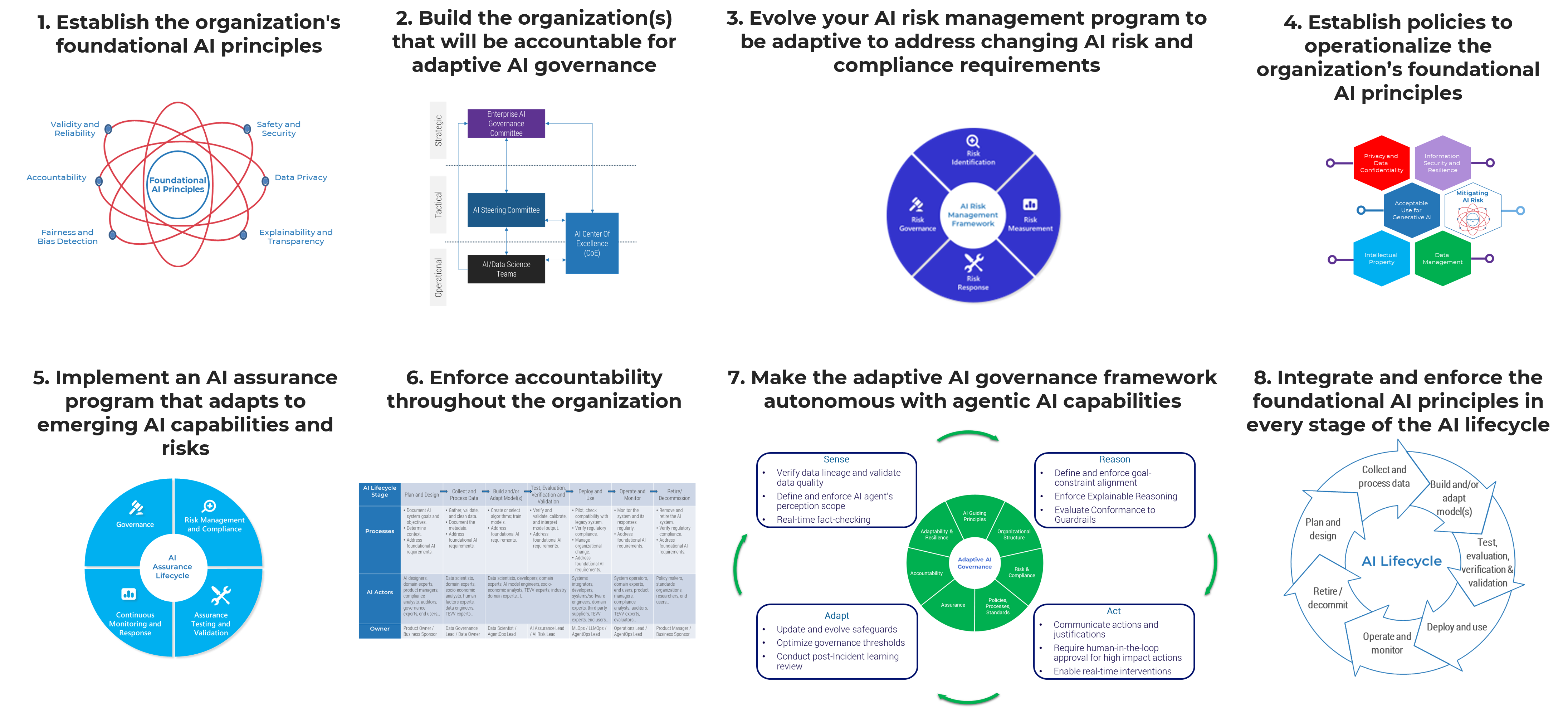

Use this step-by-step blueprint to develop an adaptive governance framework for the safe, responsible deployment and use of AI

Our comprehensive blueprint helps you understand the components of an adaptive AI governance framework and how to customize it for your organization. Use our research and supporting tools to:

- Assess your current AI governance capabilities.

- Establish the organization’s foundational AI principles.

- Establish your organization’s AI governance structure.

- Develop an AI risk and compliance program.

- Implement AI policies to operationalize the foundational AI principles.

- Develop an AI assurance program.

- Ensure accountability is assigned for every AI application.

- Implement an adaptive and resilient AI governance framework.

- Integrate AI governance into the AI lifecycle.

- Build the adaptive AI governance roadmap.

Member Testimonials

After each Info-Tech experience, we ask our members to quantify the time savings, monetary impact, and project improvements our research helped them achieve. See our top member experiences for this blueprint and what our clients have to say.

- Overall Impact: 9.5/10

- Average $ Saved: $54,932

- Average Days Saved: 25

| Client | Experience | Impact | $ Saved | Days Saved | Comment |
|---|---|---|---|---|---|
| Nippon Sanso Holdings Corporation | Workshop | 10/10 | $79,499 | 50 | Best: Quality of the research in combination with the seniority, knowledge and experience of the Info-Tech analyst conducting the workshop (Areez E... |
| University of North Texas System | Guided Implementation | 10/10 | $6,800 | 10 | |
| State of Kentucky - Kentucky Transportation Cabinet | Workshop | 8/10 | $136K | 20 | This was a great way to get our team together for focused time with an excellent and engaging facilitator. We were able to better frame our though... |
| Worldwide Assurance For Employees of Public Agencies Inc | Workshop | 10/10 | $13,600 | 29 | Sharon was fantastic. Her guidance really helped to get us started on our AI governance program. Great engagement and kept the sessions interesting... |
| Bloor Homes Ltd | Guided Implementation | 10/10 | $37,924 | 5 | |
| Citizens Property Insurance Corporation | Workshop | 8/10 | N/A | N/A | Denis did a good job facilitating AI Strategy and Governance workshop for Citizens Property Insurance. The workshop participants include diverse pr... |
| Nodak Insurance Company | Guided Implementation | 10/10 | N/A | 50 | The team is customer-focused and tailored the experience to exactly what I needed. The value of what was provided felt clear immediately throughout... |
| Arizona Department of Environmental Quality | Workshop | 10/10 | $34,000 | 32 | |
| JFK International Air Terminal | Guided Implementation | 10/10 | $7,000 | 50 | Amazing to have the opportunity to speak with experts in AI and walk through the research & advisory blueprints. |
| Settlement Services International | Guided Implementation | 10/10 | $27,000 | 50 | clear direction, precise, quick and always available to drive the AI Strategy paper |
| Dataprise Inc. | Guided Implementation | 10/10 | $13,600 | 20 | |
| Trinidad and Tobago Unit Trust Corporation | Workshop | 9/10 | $74,800 | 50 | The experience enabled us to get the work done in a timely manner , utilizing useful templates. |
| Walsworth Publishing Company, Inc. | Guided Implementation | 10/10 | N/A | 1 | |
| Heritage Petroleum Co Ltd | Guided Implementation | 9/10 | $5,440 | 2 | |
| Utah Transit Authority | Workshop | 9/10 | $13,600 | 20 | |
| Oak Valley Health | Guided Implementation | 10/10 | $9,000 | 10 | Usman is very flexible and listens. using his experience, he brings suggestion and ensures his deliverables are valuable. I really appreciate Usm... |
| United States Air Force, Air Education and Training Command | Workshop | 9/10 | N/A | 20 | The best part of our AI Governance Workshop was the collaboration amongst so many stakeholders within our Air Force Major Command (MAJCOM). Doug Po... |
| City Of Whitehorse | Workshop | 9/10 | $10,000 | 5 | |
| The First American Corporation | Guided Implementation | 9/10 | N/A | N/A | I cannot answer the questions above as I have work to do on my side before they can be answered. The meeting was very helpful and will unblock me. |
| Veracode, Inc. | Guided Implementation | 9/10 | N/A | 20 | |
| Sunflower Bank | Guided Implementation | 10/10 | N/A | 110 | Altaz was very knowledgeable, and it was a very good and engaging conversation. |
| The Salvation Army UK and Ireland | Guided Implementation | 8/10 | N/A | N/A | We enjoy working with Altaz as he provides us with some great advice and practicable insights and makes us think differently which we value greatly... |
| City of Leduc | Guided Implementation | 10/10 | $2,000 | 5 | |
| Kids Help Phone | Guided Implementation | 10/10 | $2,000 | N/A | |
| Dentons Canada Services LP | Guided Implementation | 10/10 | $10,000 | 2 | Altaz always offers pragmatic and meaningful solutions to problems. |
| City Of Chesapeake | Workshop | 10/10 | $34,000 | 20 | Doug effectively guided us through the governance process. Although we had already completed our AI Policy, our CISO mentioned that he gleaned a fe... |
| NSW Police | Guided Implementation | 10/10 | $19,800 | 20 | The talk with Julianna allowed me to think AI strategy from different angle, to suit the work environment I work in. Also she went through a few t... |
| Mencap | Guided Implementation | 8/10 | $3,699 | 2 | Good session with helpful documents and templates provided |
| Cairns Regional Council | Guided Implementation | 10/10 | $10,350 | 14 | No bad parts, Julianna is excellent and the guidance is extremely valuable |
| Institute of Nuclear Power Operations | Guided Implementation | 10/10 | N/A | N/A | Very clear and pragmatic advice |

Workshop: Establish Your Adaptive AI Governance Program: From Principles to Practice

Workshops offer an easy way to accelerate your project. If you are unable to do the project yourself, and a Guided Implementation isn't enough, we offer low-cost delivery of our project workshops. We take you through every phase of your project and ensure that you have a roadmap in place to complete your project successfully.

Module 1: Review Current AI Strategy and Governance

Activities

- Review the organization's current AI governance model.

- Review the organization's existing AI strategy and roadmap.

- Compile an inventory of applicable legal and regulatory requirements.

Outputs

- An understanding of the organization's current AI governance model and strategy roadmap.

- A list of compliance requirements for AI use.

Module 2: Establish Foundational AI Principles and Governance

Activities

- Level-set on the goals of AI governance and current AI governance maturity.

- Determine and define foundational AI principles for the organization.

- Identify key strategic, tactical, and operational elements of the organization’s AI governance structure.

- Define mandate, roles, and responsibilities.

- Develop a charter for your executive-level committee.

Outputs

- Foundational AI principles

- Outline of your new AI governance structure and committees

- Enterprise AI Governance Committee Charter

Module 3: Define AI Governance Framework

Activities

- Identify any risk and compliance requirements.

- Assess the organization’s AI risk tolerance.

- Recommend an AI governance procedure and policy framework.

- Recommend an AI assurance framework.

- Establish an accountability structure for AI applications.

Outputs

- Draft AI governance policy and procedure framework

- A RACI chart that identifies the roles of different committees in supporting your AI governance initiatives
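A RACI chart is easier to keep consistent when it can be checked mechanically. The sketch below is a hypothetical illustration, not part of Info-Tech's deliverables: it stores a RACI matrix as a Python dictionary (activity to role to code) and flags activities that break two common RACI conventions, exactly one Accountable role and at least one Responsible role. The activity names, role names, and the one-code-per-role simplification are all assumptions; organizations that use combined "A/R" assignments would need to relax the Responsible check.

```python
from __future__ import annotations

# Hypothetical RACI matrix: activity -> {role: code}. One code per role per
# activity, drawn from R (Responsible), A (Accountable), C (Consulted), I (Informed).
RACI: dict[str, dict[str, str]] = {
    "Approve AI policy": {
        "Enterprise AI Governance Committee": "A",
        "AI governance working group": "R",
        "Legal": "C",
        "IT": "I",
    },
    "Deploy AI agent": {
        "Product owner": "A",
        "Development team": "R",
        "Risk": "C",
        "Enterprise AI Governance Committee": "A",  # deliberate error: a second 'A'
    },
}


def raci_issues(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Check each activity for valid codes, exactly one 'A', and at least one 'R'."""
    issues: list[str] = []
    for activity, roles in matrix.items():
        codes = list(roles.values())
        invalid = sorted(set(codes) - set("RACI"))
        if invalid:
            issues.append(f"{activity}: invalid codes {invalid}")
        accountable = codes.count("A")
        if accountable != 1:
            issues.append(f"{activity}: expected exactly one 'A', found {accountable}")
        if "R" not in codes:
            issues.append(f"{activity}: no role is Responsible")
    return issues


if __name__ == "__main__":
    for issue in raci_issues(RACI):
        print(issue)
```

Running the check on the example matrix reports only the "Deploy AI agent" activity, which has two accountable roles.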

Module 4: Integrate Governance Into the AI Lifecycle

Activities

- Define AI governance adaptability and resilience requirements.

- Define key AI governance requirements for each stage of the AI lifecycle.

Outputs

- Targets for the required level of AI governance adaptability and resilience

Module 5: Build an AI Governance Implementation Roadmap

The Purpose

Develop a roadmap and communication plan to proactively govern AI.

Key Benefits Achieved

Implement a dynamic, system-oriented, and continuous-monitoring governance model.

Activities

Outputs

Identify AI governance implementation initiatives.

Develop a high-level AI governance implementation roadmap.

- AI governance implementation roadmap

Establish Your Adaptive AI Governance Program: From Principles to Practice

Prepare your organization for agentic AI and tomorrow’s AI technologies and solutions.

Analyst perspective

Bill Wong

AI Research Fellow

Info-Tech Research Group

Valence Howden

Advisory Fellow

Info-Tech Research Group

Doug Powell

Senior Workshop Director

Info-Tech Research Group

The relevance of traditional AI governance models is being challenged as AI evolves at an ever-increasing rate. The world continues to become more interconnected, and new AI risks continue to emerge. The introduction of agentic AI, meaning AI systems that can act autonomously, exposes numerous gaps in traditional AI governance programs. A new paradigm is required for organizations to leverage new AI technologies while governing the risks they introduce.

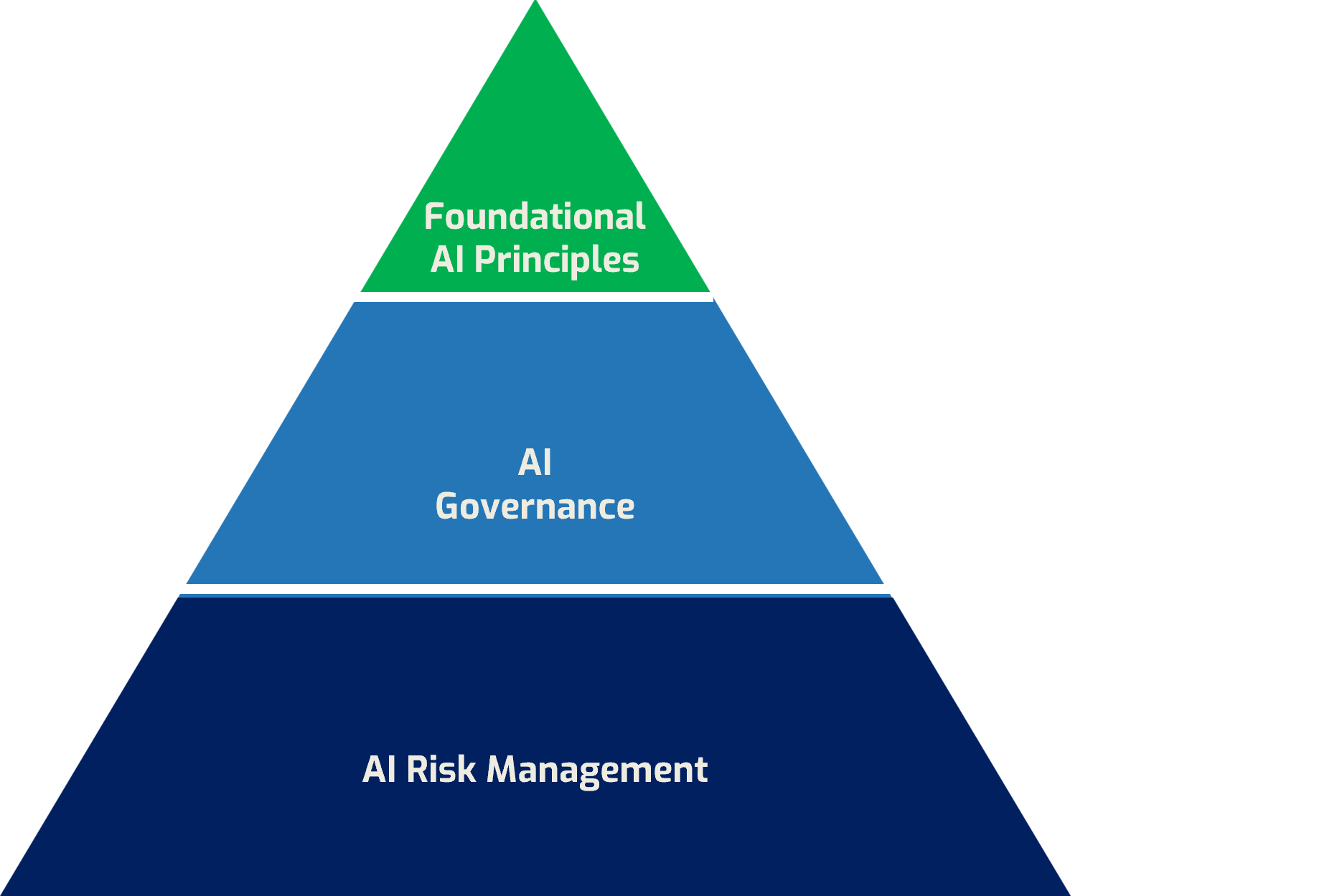

Info-Tech’s adaptive AI governance program offers a framework for the safe, responsible deployment and use of AI. It ensures alignment with the organization’s objectives and values while also adhering to the organization’s foundational AI principles and regulatory obligations. It provides a structured approach to monitoring, operating, and managing the effective and human-centric use and development of AI systems.

Adaptive AI governance will continue to be a subset of enterprise governance, a strategic practice that allows the organization to mitigate potential risks and drive innovation and value.

Organizations that find it challenging to introduce an adaptive AI governance program can start by implementing a traditional AI governance program and evolving it to be more adaptive and responsive. An adaptive AI governance program involves collecting more external operational data about the AI system, using the latest AI models to reason and to orchestrate AI agents, having those agents use tools to perform various tasks, and then continuing to adapt by learning from the recommendations made and actions performed.

Adaptive AI governance programs integrate governance throughout the AI lifecycle, enabling the organization to proactively uncover possible gaps with respect to governing the AI system. Implementing an adaptive AI governance program improves AI risk identification and governance, alignment to foundational AI principles, and value to the organization.

Executive summary

| Your Challenge | Common Obstacles | Info-Tech’s Approach |
|---|---|---|
| You have been given a mandate to govern how you will responsibly deliver on your AI strategy and target applications. You must: | Governance is often viewed as a restrictive and bureaucratic function that impairs innovation. Adaptive AI governance requires full adoption, from developers to senior stakeholders, as an enabler of safe and responsible innovation. | Adaptive AI governance represents an evolution from static, project-based governance to a dynamic, system-oriented, continuous-monitoring governance model. The goal is to evolve your governance framework: Develop a roadmap and communication plan to proactively govern AI in your organization. |

Overarching Info-Tech Insight

Organizations considering agentic AI applications will require an adaptive AI governance model that can evolve and respond to the new risks that agentic AI can introduce.

Establish an adaptive AI governance program to address the risks that AI introduces

- Establish foundational AI principles to align with the organization’s strategic principles.

- Build/leverage organizational structures to support the use of AI in a manner consistent with the organization’s governance framework, values, and culture.

- Identify and comply with risk and compliance requirements.

- Establish policies, processes, and standards to operationalize the foundational AI principles.

- Develop an assurance program to monitor and confirm AI applications meet governance requirements.

- Assign roles and responsibilities to ensure the organization is accountable for the use of any AI applications.

- Ensure the governance program is resilient and can adapt as AI evolves.

Info-Tech advisory services lead to measurable value

Info-Tech members saved an average of $54,951 and 26 days by working with an Info-Tech analyst on developing an AI governance program.*

Why do members report value from analyst engagement?

- Get expert advice on your specific situation to overcome obstacles and speed bumps.

- Structure the project and stay on track.

- Review project deliverables and ensure the process is applied properly.

* Based on client response data from Info-Tech’s Measured Value Survey, following analyst advisory on BCP.

Benefits of adaptive AI governance

Adaptive AI governance is a dynamic framework that continuously evolves in response to new risks, technological changes, and regulations. It embeds feedback loops that enable governance to adapt as conditions change.

Organizations with adaptive AI governance:

- Ensure continuous compliance for an evolving regulatory landscape.

- Enable proactive rather than reactive risk management.

- Build resilient and self-healing AI systems.

- Foster a culture of accountability.

- Drive higher AI ROI through continuous improvement.

- Monitor AI systems in real time and govern them appropriately throughout their lifecycle.

Adaptive governance outcomes

Align to Strategy

Investments are dynamically aligned with the organization's strategic objectives.

Optimize Risk

Organizational risks can be proactively discovered, and actions can be taken in real time to minimize impacts.

Deliver Value

Transform governance from a cost center into an enabler of safe and trustworthy innovation.

Optimize Resource Allocation

Resources are allocated across the organization dynamically to optimize for business value.

Measure Performance

Operational data is continuously monitored, and governance actions can be performed in real time when thresholds are exceeded.
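The "Measure Performance" outcome describes a feedback loop: operational data is monitored continuously, and a governance action is triggered when a threshold is exceeded. The Python sketch below shows that pattern under stated assumptions. The metric names (`drift_score`, `error_rate`), the limit values, and the action labels are hypothetical placeholders; in practice the limits would come from the organization's own AI risk tolerance, and the actions would route to its governance bodies.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str   # name of the monitored operational metric (hypothetical)
    limit: float  # value above which governance must act
    action: str   # hypothetical label for the governance action to trigger


# Hypothetical thresholds; real limits would reflect the organization's risk tolerance.
THRESHOLDS = [
    Threshold("drift_score", 0.2, "escalate_to_ai_governance_committee"),
    Threshold("error_rate", 0.05, "pause_agent_and_notify_owner"),
]


def evaluate(observation: dict[str, float], thresholds: list[Threshold] = THRESHOLDS) -> list[str]:
    """Return the governance actions for every metric that exceeds its limit.

    Metrics missing from the observation are skipped rather than treated as breaches.
    """
    actions: list[str] = []
    for t in thresholds:
        value = observation.get(t.metric)
        if value is not None and value > t.limit:
            actions.append(t.action)
    return actions


if __name__ == "__main__":
    # A small stream of monitoring snapshots for one AI system.
    stream = [
        {"drift_score": 0.05, "error_rate": 0.01},
        {"drift_score": 0.31, "error_rate": 0.02},
        {"drift_score": 0.25, "error_rate": 0.09},
    ]
    for i, obs in enumerate(stream):
        print(i, evaluate(obs))
```

This only covers the detection half of the loop. An adaptive program would also feed the outcomes of triggered actions back into how the thresholds themselves are reviewed and updated.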

Traditional vs. adaptive AI governance: Evolving from static to dynamic resilience

| | Traditional AI Governance | Adaptive AI Governance |
|---|---|---|
| Risk Management | Reactive and Periodic | Predictive and Continuous |
| Policy Enforcement | Static and Manual | Dynamic and Automated |
| Assurance | Predeployment Validation | Continuous Verification |
| Accountability | Diffuse and Retrospective | Embedded and Real Time |
| Adaptability and Resilience | Rigid and Fragile | Flexible and Robust |
| Value to the Organization | Defensive and Compliance Focused | Enabling and Business Value Driven |

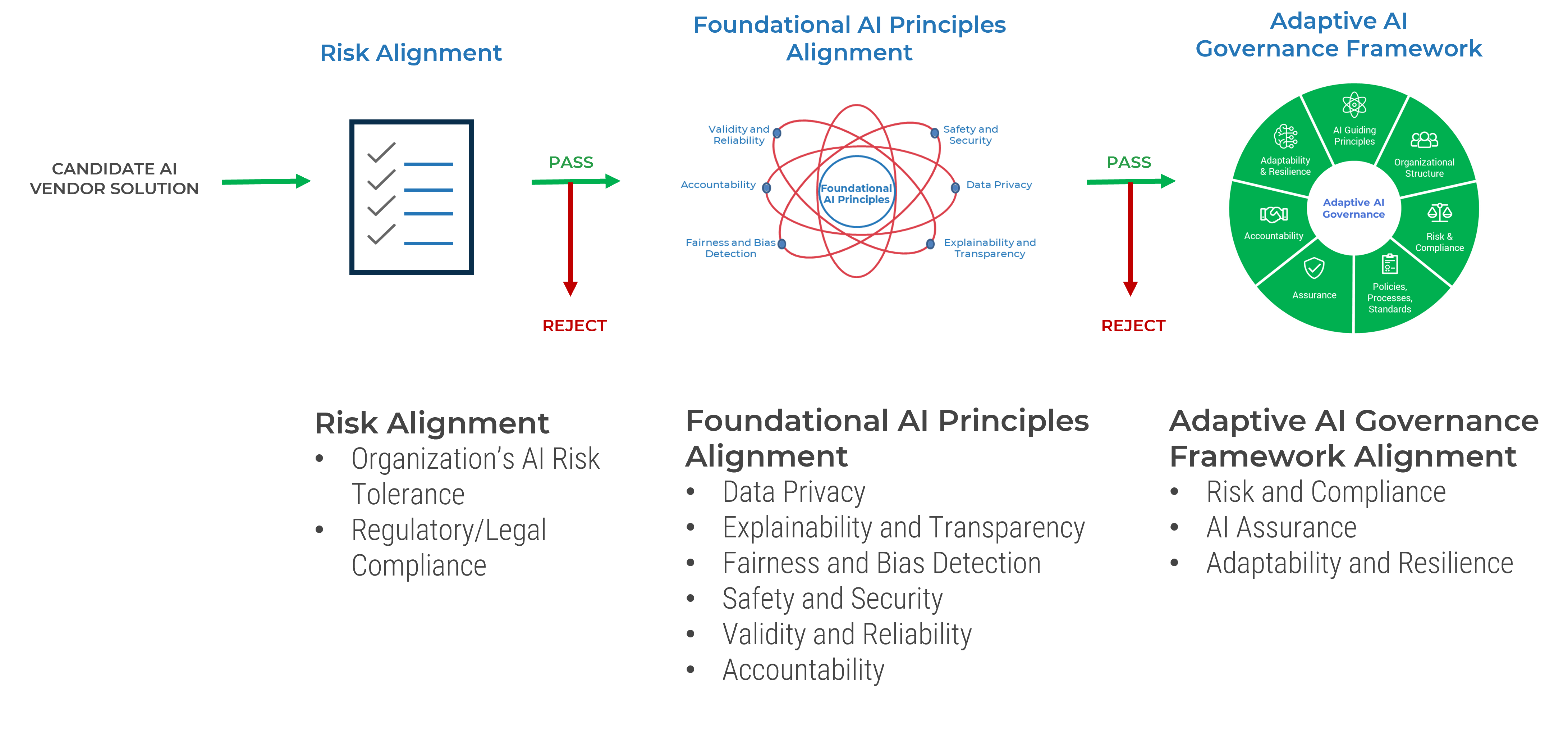

Build your adaptive AI governance roadmap

Applying AI governance for AI vendor solutions

Blueprint deliverables

Each step of this blueprint is accompanied by supporting deliverables to help you accomplish your goals:

AI Governance Maturity Assessment Tool

Conduct a structured review of your current and target governance capabilities.

Enterprise AI Governance Committee Charter Example

Define your Enterprise AI Governance Committee Charter

AI Governance Policy Template

Document AI policies.

AI Policy Worksheet

View AI policies from various institutions.

AI Risk Assessment Worksheet

Assess potential AI use case risks for your organization.

Adaptive AI Governance Executive Presentation

Summarize the key findings of this blueprint and provide your C-suite with a view of your organization’s AI governance challenges and your plan to meet them.

Info-Tech offers various levels of support to best suit your needs

| DIY Toolkit | Guided Implementation | Workshop | Consulting |
|---|---|---|---|
| "Our team has already made this critical project a priority, and we have the time and capability, but some guidance along the way would be helpful." | "Our team knows that we need to fix a process, but we need assistance to determine where to focus. Some check-ins along the way would help keep us on track." | "We need to hit the ground running and get this project kicked off immediately. Our team has the ability to take this over once we get a framework and strategy in place." | "Our team does not have the time or the knowledge to take this project on. We need assistance through the entirety of this project." |

Diagnostics and consistent frameworks are used throughout all four options.

Workshop overview

Contact your account representative for more information.

workshops@infotech.com 1-888-670-8889

| | Prework | Session 1 | Session 2 | Session 3 | Session 4 |
|---|---|---|---|---|---|
| Activities | Review Current AI Strategy and Governance | Establish Foundational AI Principles and Governance | Define AI Governance Framework | Integrate Governance Into the AI Lifecycle | Build an AI Governance Implementation Roadmap |
| | 0.1 Understand the organization’s current AI governance model. | 1.1 Level-set on the goals of AI governance and current AI governance maturity. | 2.1 Identify any risk and compliance requirements. | 3.1 Define AI governance adaptability and resilience requirements. | 4.1 Identify AI governance implementation initiatives. |
| Deliverables | | | | | |

Phase 1

Assess Your Current AI Governance Capabilities

Step 1.1

Assess Your Current AI Governance Capabilities

Activities

1.1.1 Document the expected benefits of AI governance

1.1.2 Assess your AI governance practices

This step involves the following participants:

- Interested board members

- Executive stakeholders (especially from Legal, Compliance, and Risk)

- AI governance working group

- AI governance–related officials (if any)

Outcomes of this step

- A shared understanding of the goals of AI governance, its benefits, and your organization’s current state with respect to AI governance

Adaptive AI governance challenges

| Business Challenges | Technical Challenges |
|---|---|
| | |

AI governance frameworks and initiatives

| | EU AI Act | NIST AI RMF | ISO/IEC 42001 | World Economic Forum AI Governance Alliance | Info-Tech Adaptive AI Governance |
|---|---|---|---|---|---|
| Focus | Providing a regulatory framework for AI product and market safety | Providing a risk management framework driven by AI trustworthiness | Providing an AI certification program driven by responsible AI principles | Designing practical, actionable guidance and tools for responsible generative AI deployment | Providing an AI governance framework that reacts in real time to changes in the environment |
| Regulatory Nature | Mandatory legal obligations | Voluntary guidance | Voluntary standard, certification available | Voluntary public-private partnership | Nonregulatory, voluntary guidance |
| AI Principles | Emphasizes safety, fundamental rights, and a high degree of transparency, human oversight, and accountability for high-risk AI | Structured around trustworthy AI principles: safety, security, transparency, explainability, fairness, accountability, and privacy | Follows management system principles: risk-based thinking, context of the organization, continuous improvement, and annex | Centered on safety, trust, inclusivity, and ensuring the responsible deployment of generative AI technologies | Focuses on foundational AI principles: safety and security, privacy, explainability and transparency, validity and reliability, fairness and bias detection, and accountability |
| Framework Adaptability | Low | High | Medium | High | High |
| Implementation Approach | Requires a formal conformity assessment | Organizational guidance (govern-map-measure-manage) | Focuses on creating an auditable management system | Leverages a global community to prototype, pilot, and scale governance tools | Structured and adaptable process |

Key areas of governance responsibility

Strategic Alignment

Ensures that technology investments and portfolios are aligned with the organization’s needs.

Resource Optimization

Ensures that people, financial, knowledge, and technology resources are appropriately allocated across the organization.

Value Delivery

Reviews the outcomes of technology investments and portfolios to ensure benefits realization.

Performance Measurement

Monitors and directs the performance of technology investments to determine corrective actions and understand successes.

Risk Optimization

Defines and owns the risk thresholds and register to ensure that decisions are in line with the posture of the organization.

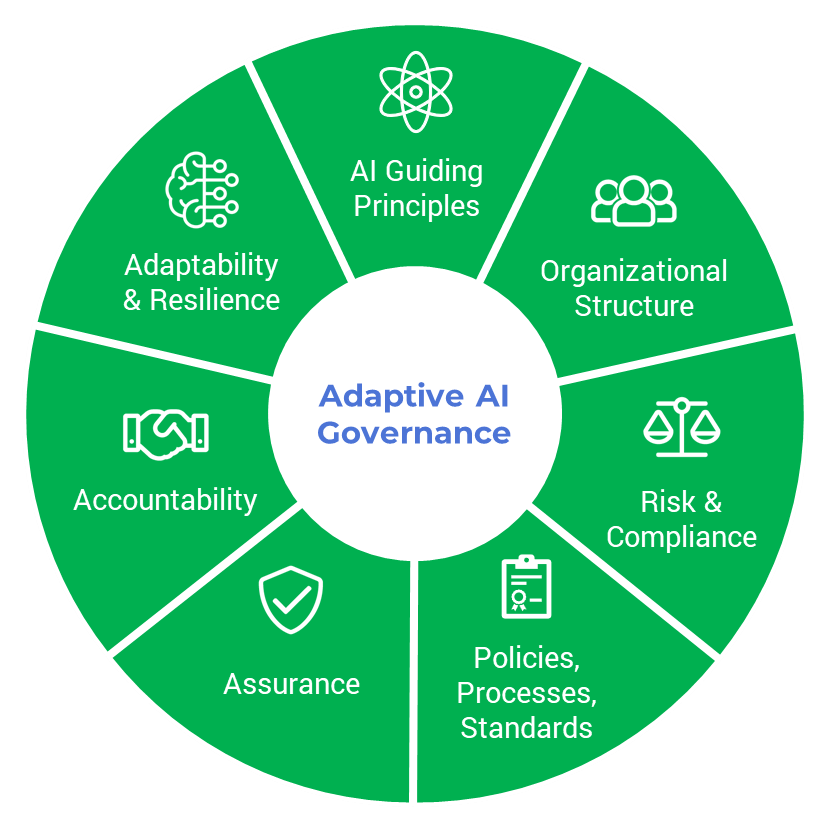

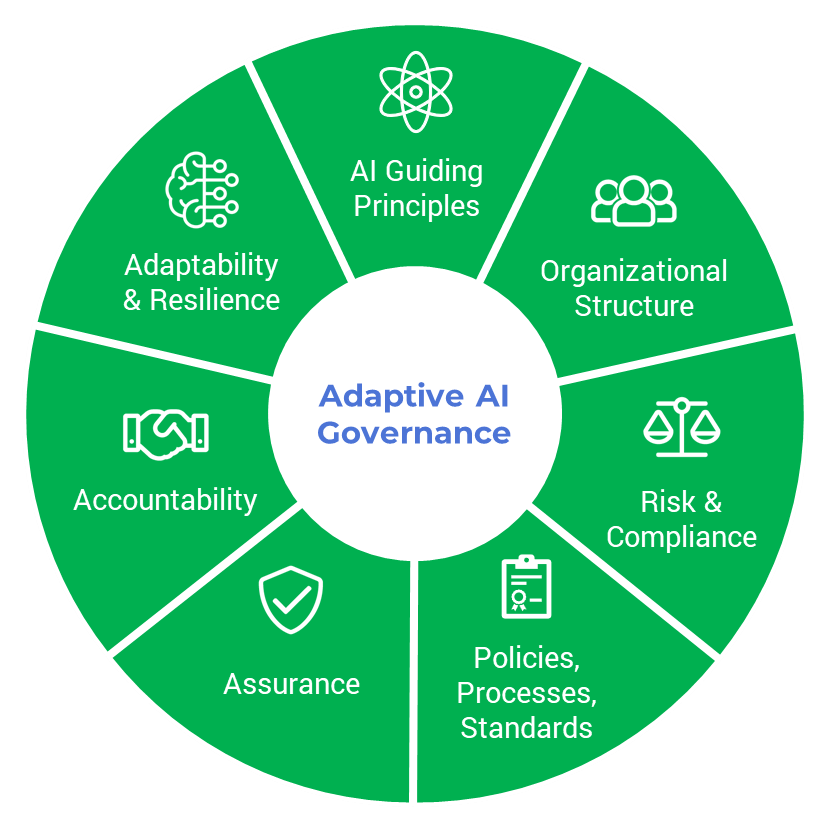

Adaptive AI governance components

| Component | Description |
|---|---|
| Foundational AI Principles | A core component of an organization’s AI strategy that drives AI governance. The principles should be periodically reviewed and validated to ensure the framework itself remains aligned to organizational goals. |
| Organizational Structure | The defined roles, responsibilities, and oversight bodies (such as an AI governance committee or the AI Center of Excellence) that establish clear ownership and decision-making authority for AI governance. |
| Risk and Compliance | The dynamic processes for identifying, assessing, and mitigating AI-specific risks while continuously complying with evolving legal, regulatory, and internal standards. |
| Policies/Processes/Standards | The mandated, actionable rules and operational procedures that translate guiding principles into daily practice for users, developers, and stakeholders. Control mechanisms are required to support policies, monitor policy adherence, and ensure the policies are updated to reflect changing business context, risk tolerance, and the evolving AI risk landscape. |
| Assurance | The independent validation and auditing mechanisms (e.g., impact assessments, certifications) that provide verified evidence and confidence that AI systems are functioning as intended and governed effectively. |
| Accountability | The clear assignment of answerability for AI outcomes and decisions, ensuring there is always a designated owner or department responsible for AI system behavior and for addressing any harms. |
| Adaptability and Resilience | The framework’s adaptability refers to its built-in capability to continuously learn, evolve, and update its own rules and processes in response to new technologies, emerging risks, and changing regulations. The framework’s resilience refers to its capacity to withstand, absorb, and rapidly recover from AI-related failures or disruptions, maintaining operational integrity and ensuring AI systems fail gracefully. |
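The Accountability component requires that a designated owner always exists for each AI system. One way to make that requirement checkable is to keep an AI application inventory and flag entries with no accountable owner. The Python sketch below is a minimal illustration assuming a simple in-memory inventory; the `AIApplication` fields, the risk tiers, and the example application names are hypothetical and are not drawn from Info-Tech's templates.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class AIApplication:
    name: str
    accountable_owner: Optional[str]  # person or department answerable for outcomes
    risk_tier: str                    # hypothetical tiering, e.g. "high", "medium", "low"


def unowned(inventory: list[AIApplication]) -> list[str]:
    """Return the names of applications with no designated accountable owner.

    An owner that is None, empty, or only whitespace counts as missing.
    """
    return [
        app.name
        for app in inventory
        if not (app.accountable_owner and app.accountable_owner.strip())
    ]


if __name__ == "__main__":
    inventory = [
        AIApplication("customer-support-agent", "Customer Service", "high"),
        AIApplication("invoice-classifier", None, "medium"),
        AIApplication("internal-search", "  ", "low"),
    ]
    missing = unowned(inventory)
    if missing:
        print("Accountability gaps:", ", ".join(missing))
```

A check like this could run whenever the inventory changes, so that a new AI application cannot go live without an assigned owner.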

Five key principles for building an adaptive governance framework

| Delegate and empower | Define outcomes | Make risk-informed decisions | Embed and automate | Establish standards and behavior |
|---|---|---|---|---|
| Decision-making must be delegated within the organization, and all resources must be empowered and supported to make effective decisions. | Outcomes and goals must be clearly articulated and understood across the organization to ensure decisions stay within reasonable boundaries. | Integrated risk information must be available with sufficient data to support decision-making and design approaches at all levels of the organization. | Governance standards and activities need to be embedded in processes and practices. Optimal governance reduces its manual footprint while remaining viable. This also allows for more dynamic adaptation. | Standards and policies need to be defined as the foundation for embedding governance practices organizationally. These guardrails will create boundaries to reinforce delegated decision-making. |

1.1.1 Document the expected benefits of AI governance

Run a brainstorming activity with key stakeholders to confirm why AI governance is needed and what benefits are expected. Use the examples below as a starting point. Document your responses on the next slide.

Why do we need embedded AI governance? What business-critical issues and challenges should better governance address? For example:

- AI is a novel technology that exposes our organization to new risks.

- Our existing governance structures require support to effectively identify, analyze, and implement controls for AI risks.

- We must enable the ethical and responsible use of new AI technologies.

- AI governance cannot exist in a vacuum and must be embedded within our existing enterprise governance model.

- Emergent risks may require a faster response than our existing governance structures permit.

What business benefits do we expect from AI governance? For example:

- Develop new AI solutions with confidence that the solutions will deliver the expected value to our customers and other stakeholders.

- Streamline the AI development process with standards, best practices, and frameworks that avoid costly and time-consuming rework.

- Ensure solutions meet regulatory, contractual, and legal compliance requirements in an evolving compliance landscape.

- Respond appropriately to emerging risks.

| Input | Output |
|---|---|
| | |

| Materials | Participants |
|---|---|
| | |

Optimize IT Governance for Dynamic Decision-Making

Maximize Business Value From IT Through Benefits Realization

Build an IT Risk Management Program

Review and Improve Your IT Policy Library

Establish a Sustainable ESG Reporting Program

Take Control of Compliance Improvement to Conquer Every Audit

Build an Effective IT Controls Register

Integrate IT Risk Into Enterprise Risk

The ESG Imperative and Its Impact on Organizations

Make Your IT Governance Adaptable

Build an IT Risk Taxonomy

Prepare for AI Regulation

Building the Road to Governing Digital Intelligence

Identify and Respond to Credible Threats Arising From Global Uncertainty

GRC Software Selection Guide

Establish Your Adaptive AI Governance Program: From Principles to Practice

Build an Integrated Enterprise Risk Management Program